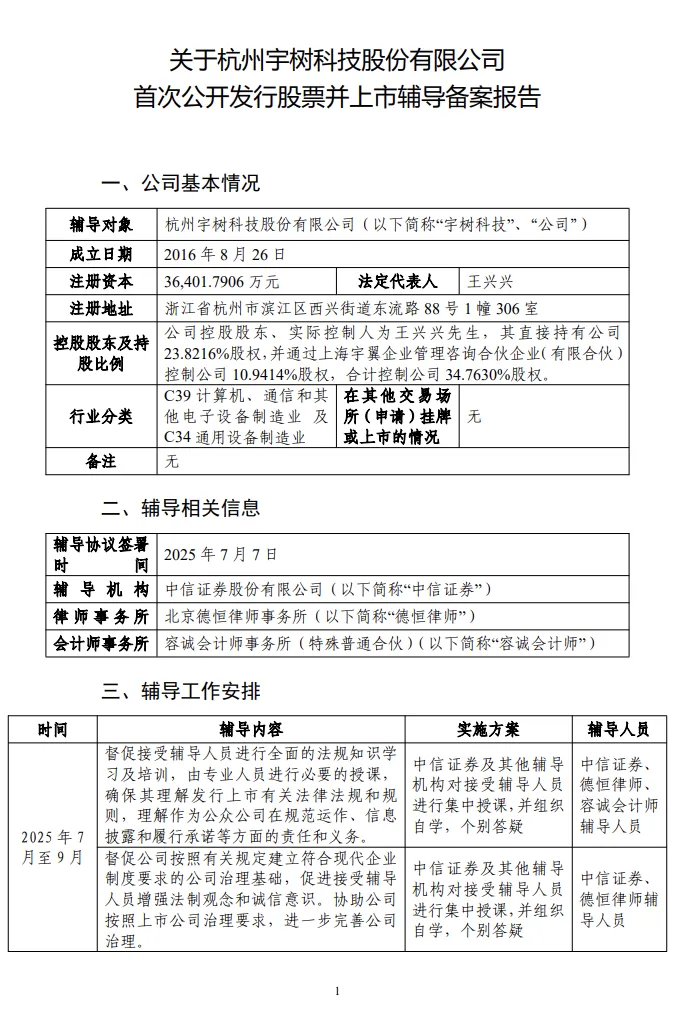

On August 7, 2025, OpenAI officially released the GPT-5 series of models, the most significant product upgrade in the company's history. The release comprises four versions: GPT-5, GPT-5 Mini, GPT-5 Nano, and GPT-5 Pro, each optimized for different application scenarios, marking a new stage in the development of AI technology.

Unified Intelligent System: A Revolutionary Breakthrough in Technical Architecture

OpenAI positions GPT-5 as a "unified intelligent system" that integrates capabilities previously scattered across different models: the multimodal processing of GPT-4o, the deep reasoning of the o series, advanced mathematical calculation, and agentic task execution. This architecture eliminates the need for users to switch between models manually. Instead, a real-time router automatically selects the most suitable processing method based on task complexity.
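OpenAI has not published how its router makes decisions, so the following is only a hypothetical sketch of per-request model selection. It scores each request with a crude length-and-keyword heuristic and sends high-scoring requests to a reasoning model. The model labels (`fast-chat`, `deep-reasoning`), the keyword list, and the threshold are all invented for illustration.

```python
from dataclasses import dataclass

# Hypothetical model labels; illustrative only, not OpenAI's internal names.
FAST_MODEL = "fast-chat"
REASONING_MODEL = "deep-reasoning"

# Hypothetical phrases suggesting a request needs multi-step reasoning.
REASONING_HINTS = ("prove", "step by step", "debug", "derive", "think hard")


@dataclass
class Request:
    text: str
    has_attachments: bool = False


def complexity_score(req: Request) -> int:
    """Crude heuristic: long prompts, reasoning phrases, and attachments raise the score."""
    score = len(req.text.split()) // 50  # one point per ~50 words
    lowered = req.text.lower()
    score += sum(3 for hint in REASONING_HINTS if hint in lowered)
    if req.has_attachments:
        score += 1
    return score


def route(req: Request, threshold: int = 3) -> str:
    """Send simple requests to the fast model and complex ones to the reasoning model."""
    return REASONING_MODEL if complexity_score(req) >= threshold else FAST_MODEL


print(route(Request("What's the capital of France?")))  # fast-chat
print(route(Request("Prove step by step that sqrt(2) is irrational.")))  # deep-reasoning
```

A production router would more plausibly use a learned classifier than hand-written keywords; the heuristic here just keeps the idea visible.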

GPT-5 posts strong results across core benchmarks:

Mathematical Reasoning: Achieved 94.6% accuracy on AIME 2025 without using external tools.

Code Capability: Scored 74.9% on SWE-bench Verified and 88% on the Aider Polyglot multi-language programming benchmark.

Multimodal Understanding: Scored 84.2% on the MMMU benchmark.

Professional Knowledge: Scored 88.4% on GPQA, a graduate-level science question-answering benchmark.

Detailed Analysis of the Four Versions

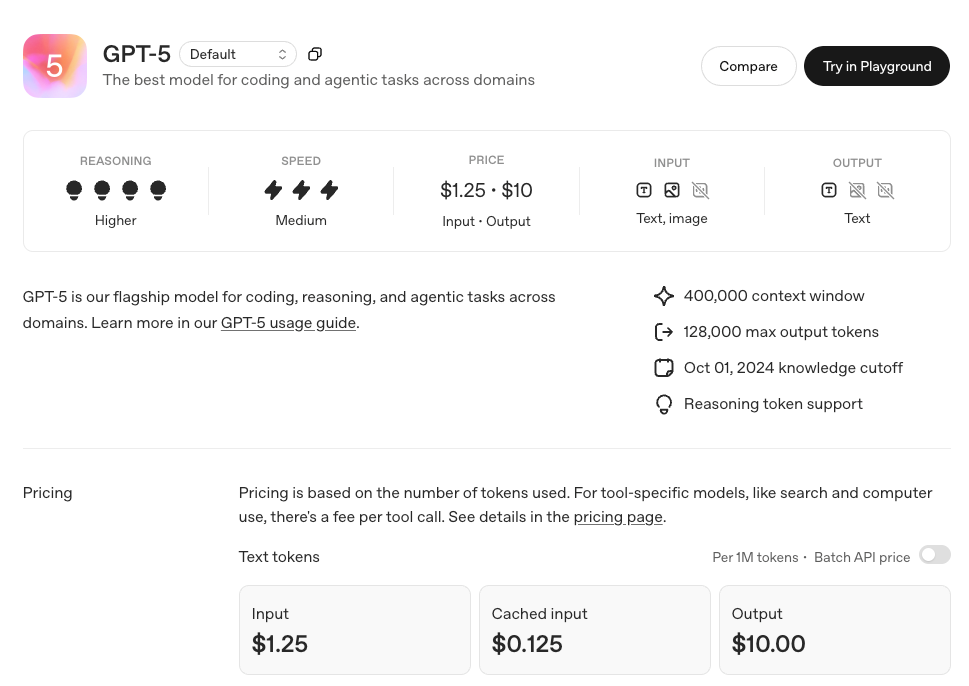

GPT-5 (Flagship Version): The Strongest Reasoning and Multimodal Capabilities

As the flagship product of the series, GPT-5 is designed for complex tasks and possesses the following core features:

Breakthrough in Reasoning Ability: Built-in chain-of-thought reasoning decomposes complex problems and solves them step by step. In internal tests, GPT-5 outperformed all previous models on complex tasks across more than 40 professional fields.

Comprehensive Multimodal Support: Supports text, image, speech, and video processing, inheriting Sora's video generation technology. Users can upload content in various formats, and GPT-5 can generate corresponding responses or perform compound tasks, such as analyzing medical images or translating video content in real time.

Agent-Based Task Execution: Supports complex operations such as browsing the web automatically, generating complete software applications, and managing schedules. In the launch demonstration, GPT-5 generated a complete French-learning web application with flashcards, quizzes, and progress tracking in just a few seconds from a simple description.

Significant Reduction in Hallucination Rate: Through "safe completion" technology, GPT-5's factual error rate is approximately 45% lower than GPT-4o's; in reasoning mode, it is approximately 80% lower than o3's.
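Reading these percentages as relative reductions (the usual convention for such comparisons, assumed here rather than stated in the release), the factual error rates $r$ relate to their baselines as:

```latex
\begin{aligned}
r_{\text{GPT-5}} &\approx (1 - 0.45)\, r_{\text{GPT-4o}} = 0.55\, r_{\text{GPT-4o}} \\
r_{\text{GPT-5, reasoning}} &\approx (1 - 0.80)\, r_{\text{o3}} = 0.20\, r_{\text{o3}}
\end{aligned}
```

For a purely hypothetical baseline of 10 factual errors per 100 responses, the first figure would mean about 5.5 errors, and the second would mean about 2.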

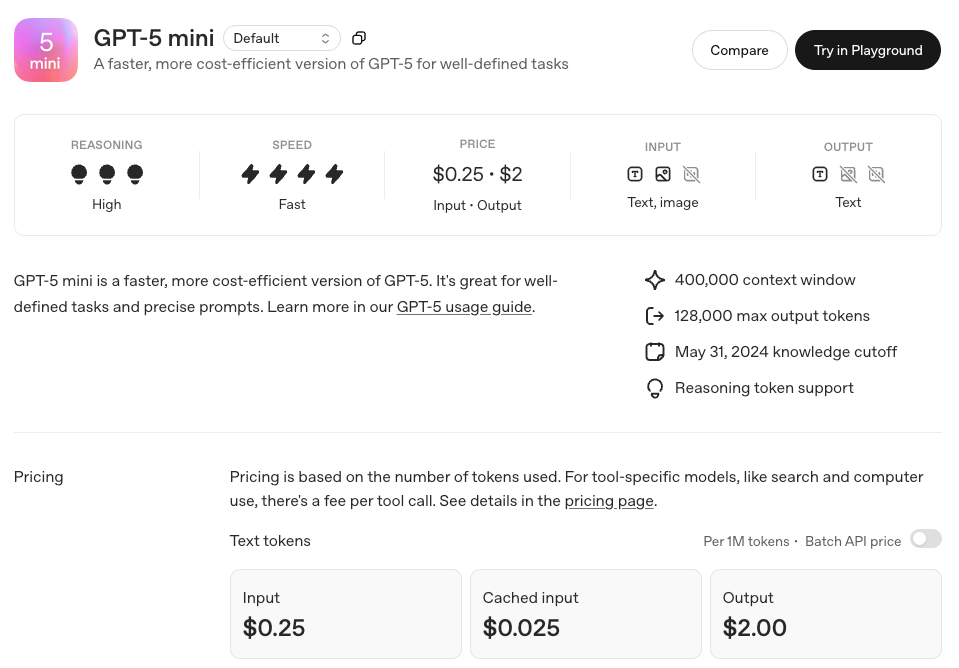

GPT-5 Mini: A Cost-Effective Lightweight Option

GPT-5 Mini is optimized for cost-sensitive applications, significantly reducing resource requirements while retaining core functions:

Supports chain-of-thought reasoning on tasks of moderate complexity.

Handles text, image, and speech processing, with more limited video processing.

Can run on devices with lower computing power, making it suitable for small and medium-sized enterprises and individual developers.

GPT-5 Nano: The Lightest and Fastest Option

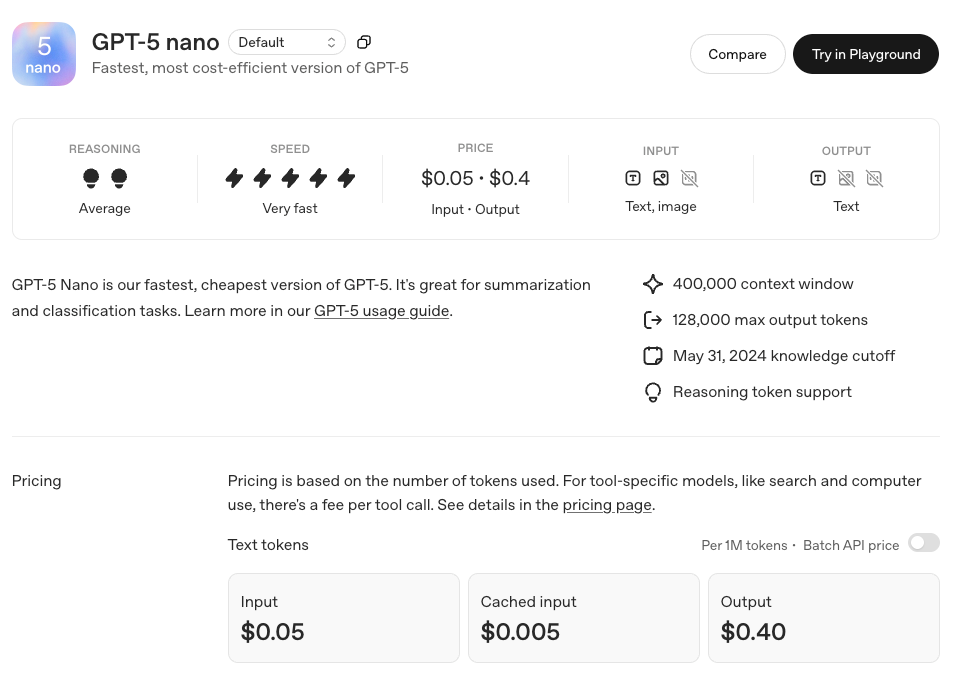

GPT-5 Nano is optimized for speed and low resource consumption and is the lightest version in the series:

Extremely low-latency responses, designed specifically for real-time applications.

Can run on devices with only 16GB of memory, such as a MacBook or a low-end server.

Simplified reasoning ability, mainly suited to quick interactions and simple tasks.

Performs comparably to o3-mini on general benchmarks.

Applicable scenarios include mobile applications, embedded systems, real-time translation, voice assistants, and other uses that demand fast responses.

GPT-5 Pro: Enhanced Version for Professional Users

GPT-5 Pro is a high-performance version designed for high-end users and enterprises:

Enhanced Reasoning Mode: Supports the "GPT-5 Thinking" mode, which reasons about complex problems for longer to maximize accuracy.

Unlimited Access: Pro users have unlimited access to GPT-5 and exclusive access to GPT-5 Pro.

Professional Multimodal Capabilities: Performs strongly on tasks such as video processing and complex image analysis, scoring 46.2% on the HealthBench Hard medical benchmark.

Deep Tool Integration: Seamlessly integrates professional tools such as search, Canvas, and code execution, providing a complete workflow experience.

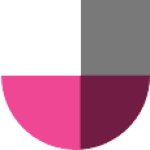

Pricing Strategy: The Largest-Scale Free Release in History

OpenAI has adopted an unprecedented open strategy, providing GPT-5 access to all user groups:

Free Users: Can use GPT-5 subject to usage limits. Once the limit is reached, the system automatically switches to GPT-5 Mini.

Plus Users ($20/month): Enjoy higher usage limits, suitable for individual users and small teams.

Pro Users ($200/month): Have unlimited access to GPT-5 and GPT-5 Pro and can use the "GPT-5 Thinking" mode.

Enterprise and Education Users: Will gain access within one week of the release, including the GPT-5 Pro version.

API Pricing: $1.25 per million input tokens and $10 per million output tokens, aimed at professional developers.
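To make the API rates concrete, here is a minimal cost estimator built on the per-million-token prices listed above. It assumes cost scales linearly with token counts and ignores any discounts (for example on cached input); if reasoning tokens are billed as output, as assumed here, they should be counted in `output_tokens`. The function name `request_cost` is illustrative, not part of any SDK.

```python
# Prices in USD per million tokens, as listed above for the GPT-5 API.
INPUT_PRICE_PER_M = 1.25
OUTPUT_PRICE_PER_M = 10.00


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimated USD cost of one request at the listed per-million-token rates."""
    return (input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000


# Example: a 20,000-token prompt that produces a 2,000-token answer.
print(f"${request_cost(20_000, 2_000):.4f}")  # $0.0450
```

Note the 8:1 ratio between output and input prices: in this example the 2,000 output tokens cost almost as much as the 20,000 input tokens, so long generations dominate the bill.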

Comprehensive Upgrade of User Experience

The GPT-5 series brings several user experience innovations:

Intelligent Model Selection: The system automatically selects the most suitable model version based on task complexity and user intent, eliminating the need for users to manually switch.

Personalized Interaction: Offers four preset personalities (Cynic, Robot, Listener, Nerd) and custom chat color options.

Enhanced Memory Capacity: A larger context window lets the model retain longer conversation histories, making interactions more coherent.

User-Friendly Design: Compared with GPT-4o, the new model is less sycophantic and uses fewer unnecessary emojis, making interactions feel more natural.

Technical Architecture Innovation

The GPT-5 series may use a Mixture of Experts (MoE) architecture, which improves efficiency by activating only a subset of its parameters for each input. The training data consists mainly of English text focused on STEM, programming, and general knowledge, with a knowledge cutoff of June 2024. Training was completed on NVIDIA H100 GPUs and consumed approximately 2.1 million GPU hours.
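Because the article only says GPT-5 *may* use MoE, the sketch below illustrates the general top-k gating idea rather than OpenAI's design. A router scores every expert, but only the `TOP_K` highest-scoring experts are actually evaluated, so most expert parameters stay inactive for any given input. The expert count, dimensions, and `TOP_K` value are arbitrary choices for illustration.

```python
import math
import random

# Toy mixture-of-experts layer; sizes are illustrative, not GPT-5's (unknown) configuration.
NUM_EXPERTS = 8
TOP_K = 2
DIM = 4

rng = random.Random(0)
# Each expert is a DIM x DIM linear map; the gate has one scoring row per expert.
experts = [[[rng.gauss(0, 1) for _ in range(DIM)] for _ in range(DIM)] for _ in range(NUM_EXPERTS)]
gate = [[rng.gauss(0, 1) for _ in range(DIM)] for _ in range(NUM_EXPERTS)]


def matvec(m, v):
    """Multiply matrix m (list of rows) by vector v."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def softmax(xs):
    """Numerically stable softmax."""
    top = max(xs)
    exps = [math.exp(x - top) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(x):
    """Route x to the TOP_K highest-scoring experts and mix their outputs."""
    scores = matvec(gate, x)  # one router logit per expert
    chosen = sorted(range(NUM_EXPERTS), key=lambda i: scores[i], reverse=True)[:TOP_K]
    weights = softmax([scores[i] for i in chosen])  # renormalize over chosen experts only
    out = [0.0] * DIM
    for w, i in zip(weights, chosen):
        y = matvec(experts[i], x)  # only TOP_K of NUM_EXPERTS experts are evaluated
        out = [o + w * yi for o, yi in zip(out, y)]
    return out, chosen


out, chosen = moe_forward([1.0, 0.5, -0.5, 2.0])
print(f"active experts: {len(chosen)} of {NUM_EXPERTS}")  # active experts: 2 of 8
```

With 2 of 8 experts active, only a quarter of the expert parameters participate in each forward pass, which is the efficiency gain the paragraph above refers to.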

Competitive Advantages and Market Impact

In today's highly competitive AI landscape, the release of GPT-5 carries major strategic significance. Facing strong competitors such as Anthropic's Claude 3.5 Sonnet, xAI's Grok 4, and Google's Gemini 2.5 Pro, OpenAI is consolidating its market position through broad free access and a significant reduction in hallucination rates.

According to OpenAI, ChatGPT's business products currently have 5 million paying users, at organizations including BNY Mellon, California State University, Figma, Intercom, and Morgan Stanley. The release of GPT-5 is expected to further accelerate enterprise AI adoption and drive digital transformation across industries.

Industry Outlook and Challenges

The release of the GPT-5 series represents a new milestone in the development of AI technology, but it also faces some challenges:

Privacy and Security: Multimodal capabilities involve processing sensitive data such as medical images and personal conversations, making data protection a key concern.

Impact on Employment: Increased automation may displace some traditional jobs, requiring social adaptation and adjustment.

Performance Verification: Although OpenAI claims that GPT-5 has "doctoral-level intelligence", how well its reasoning holds up in real-world applications remains to be verified.

Conclusion

The release of the GPT-5 series marks another major breakthrough for OpenAI. With a tiered lineup of four versions, OpenAI covers the full spectrum of needs, from individual users to corporate customers. This is not only a technological upgrade but also a comprehensive innovation in AI product strategy.

With GPT-5 becoming the new default model in ChatGPT, replacing previous models such as GPT-4o and o3, users simply open ChatGPT and type a question; the system handles it automatically and applies reasoning when necessary. This seamless experience shows AI rapidly evolving from a tool into an assistant, and from an auxiliary role into a collaborative one.